Important Stuff

Build:

catkin build

Source:

source ~/.bashrc

source ~/catkin_ws/devel/setup.bash

Create Package:

catkin_create_pkg <package name> <dependency 1> <dependency 2>

(From catkin_ws/src dir)

Go to where package is defined

roscd

Core:

Ros 1 is based on a single master node to control everything. Without this nothing will work. Normally auto started from a launch file. If its not started we can with

roscore

Launch:

roslaunch <package> <launch file>

A launch file is a xml file in the launch folder that defines a few things to launch

Example:

<launch>

<node pkg="com2009_team17" type="avoidance_server.py" name="move_server" output="screen">

</node>

<node pkg="com2009_team17" type="exploration_client.py" name="exploration_client" output="screen">

</node>

</launch>

(task2.launch)

Run:

rosrun <package> <exectuable file>

Launches a file marked as executable in the src folder of a package

Sub folder invariant (for some reason)

(chmod +x file.py)

Note: shebang is normally needed to tell os what to run this file with

#!/usr/bin/env python3

Nodes:

Overall explanation https://wiki.ros.org/ROS/Patterns/Communication#Communication_via_Topics_vs_Services_vs_X

rosnode list

List all available nodes

Nodes are things running that can pub and sub

Topics:

A message that is sent between nodes is put into a topic

For example the cmd_vel topic is for sending messages that control the robot’s speed

rostopic info /cmd_vel

rostopic list

rostopic echo /chatter

rostopic pub /cmd_vel geometry_msgs/Twist[SPACE][TAB]

(to autofill)

Types:

Each message is formatted to a type Get information from rosmsg info

rosmsg info geometry_msgs/Twist

Custom types are .msg files in the msg folder

Services:

Request -> Response between specific nodes

One server, many clients

Synchronous: Has to wait for response from server before client can continue

To call (and autofill)

rosservice call /move_service[SPACE][TAB]

Info

rosservice info /move_service:

rosmsg but for service:

rossrv info tuos_msgs/SetBool

Request/response params seperated by a ---

Custom types are in srv folder (and, again, are .srv files)

Import into python:

from tuos_msgs.srv import Approach, ApproachResponse, ApproachRequest

# Service

self.service = rospy.Service(service_name, Approach, self.srv_callback)

def srv_callback(self, request_from_client):

return ApproachResponse()

# Client

service = rospy.ServiceProxy(service_name, Approach)

request_to_server = ApproachRequest()

request_to_server.request_signal = True

response_from_server = service(request_to_server)

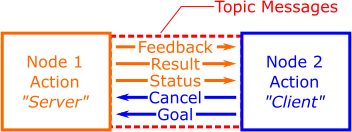

Actions:

Service but with feedback

Works using the standard pub sub commands with topics file

/camera_sweep_action_server/cancel

/camera_sweep_action_server/feedback

/camera_sweep_action_server/goal

/camera_sweep_action_server/result

/camera_sweep_action_server/status

Pub to goal to start

Sub to feedback to get the status

Define action file to set custom message types:

CameraSweep.action (in action folder, predictably)

#goal

float32 sweep_angle # the angular sweep over which to capture images (degrees)

int32 image_count # the number of images to capture during the sweep

---

#result

string image_path # The filesystem location of the captured images

---

#feedback

int32 current_image # the number of images taken

float32 current_angle # the current angular position of the robot (degrees)

Status List

PENDING=0

ACTIVE=1

PREEMPTED=2

SUCCEEDED=3

ABORTED=4

REJECTED=5

Import custom actions (this is from file move.action)

from com2009_team17.msg import MoveAction, MoveGoal, MoveFeedback,MoveResult

# Server

self.actionserver = actionlib.SimpleActionServer(self.server_name,

MoveAction, self.action_server_launcher, auto_start=False)

# Client

self.client = actionlib.SimpleActionClient(action_server_name, MoveAction)

self.client.send_goal(self.goal, feedback_cb=self.feedback_callback, done_cb=self.done_callback)

Open CV:

Python image library

Need CvBridge to convert ros raw data to images

Crop image

cropped_img = cv_img[

crop_z0:crop_z0+crop_height,

crop_y0:crop_y0+crop_width

]

View hue/saturation graph

rosrun tuos_examples image_colours.py step2_cropping.jpg

We can create a mask to filter color values

We can find the centre of a detected area

https://theailearner.com/tag/image-moments-opencv-python/

M00: the sum of all non-zero pixels in the image mask (i.e. the size of the colour blob, in pixels)M10: the sum of all the non-zero pixels in the horizontal (y) axis, weighted by row numberM01: the sum of all the non-zero pixels in the vertical (z) axis, weighted by column number

m = cv2.moments(img_mask)

cy = m['m10']/(m['m00']+1e-5)

cz = m['m01']/(m['m00']+1e-5)

cv2 save image:

full_image_path = base_image_path.joinpath(f"lol.jpg")

cv2.imwrite(str(full_image_path), cropped_img)

cv2 display filtered image (with circle):

hsv_img = cv2.cvtColor(cropped_img, cv2.COLOR_BGR2HSV)

lower_threshold = (125, 100, 0)

upper_threshold = (200, 255, 255)

img_mask = cv2.inRange(hsv_img, lower_threshold, upper_threshold)

filtered_img = cv2.bitwise_and(cropped_img, cropped_img, mask = img_mask)

cv2.circle(

filtered_img,

(int(cy), int(cz)),

10, (0, 0, 255), 2)

cv2.imshow("hehe", filtered_img)

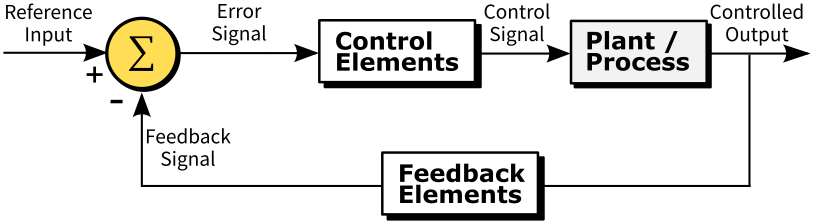

PID Control:

We can just use P or PI lol

Example for line following of filtered image:

kp = -0.03

reference_input = crop_width/2

feedback_signal = cy

error = feedback_signal - reference_input

ang_vel = kp * error

(then we can put ang_vel to robot)

Odometry

nav_msgs/Odometry contains 2 main elements

Pose is relative position/orientation from a set (usually starting) point. It is calculated from Inertial Measurement Board (on OpenCR board) as well as data from the wheel encoders

Twist is current linear/angular velocity (from just wheel encoders)

Orientation/Ang Vel is in quaternion form, convert to standard euler angles with:

from tf.transformations import euler_from_quaternion

(roll, pitch, yaw) = euler_from_quaternion([orientation.x,

orientation.y, orientation.z, orientation.w],

'sxyz')

Since this is a 2D wheeled robot we only care about X Y and yaw

Exact Examples of Commands

rosrun turtlebot3_teleop turtlebot3_teleop_key

Control robot with keys

rosrun rqt_graph rqt_graph

View node graph

roslaunch tuos_simulations rviz.launch

RViz

roslaunch turtlebot3_slam turtlebot3_slam.launch

RViz with SLAM stuff running

(rosrun map_server map_saver -f {map name})

Get map from running SLAM stuff

(eog {map name}.pgm)

Image viewer

catkin_create_pkg part1_pubsub rospy std_msgs

Create a basic package with ropy and standard messages

gzclient

Start gazebo if its been previously running headless

<command> | grep <something>

Filter the result of “command” to only lines that contain “something”

rosrun rqt_image_view rqt_image_view

RQT image view (live feed from cameras)

rostopic echo /chatter -nX

Echo the next X messages published to the ROS topic

rostopic echo /chatter -c

Clear the screen after a message is received (so it looks nicer)

cat ~/bash_alias

View the command aliases on a tuos ros system